AI Robot Tank Kit with Dual Vision Lidar, Python Programmable, Tracked Autonomous Navigation

+ €26.99 Shipping

- Brand: Unbranded

Save €500.00 (31%)

14-Day Returns Policy

Description

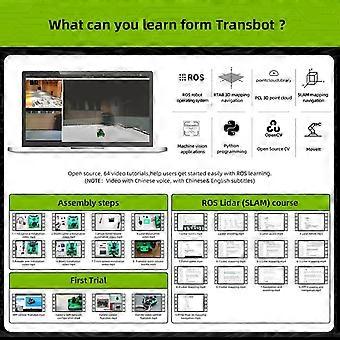

AI Robot Tank Kit with Dual Vision Lidar, Python Programmable, Tracked Autonomous Navigation

- Brand: Unbranded

- Category: Robotic Toys

- Fruugo ID: 472203558-987727331

- EAN: 6016494037655

Product Safety Information

Please see the product safety information specific to this product outlined below.

The following information is provided by the independent third-party retailer selling this product.

Product Safety Labels

- Please use this product under adult supervision.

- Keep away from fire, high temperature and sharp objects to prevent product damage or safety hazards.

- If the product is damaged, deformed or malfunctions, stop using it immediately and dispose of it properly.

Delivery & Returns

Dispatched within 24 hours

STANDARD: €26.99 - Delivery between Tue 17 March 2026–Tue 31 March 2026

Shipping from China.

We do our best to ensure that the products you order are delivered in full and according to your specifications. If you receive an incomplete order or items different from those you ordered, or you are not satisfied with the order for any other reason, you may return the order, or any products included in it, and receive a full refund for the items.

Product Compliance Details

Please see the compliance information specific to this product outlined below.

The following information is provided by the independent third-party retailer selling this product.

Manufacturer

The following information outlines the contact details for the manufacturer of the relevant product sold on Fruugo.

- Guangzhou Fengshangye E-Commerce Co., Ltd.

- Room 103, Building 3, No. 3, Baichen Road

- Jinshan Village, Shiqi Town, Panyu District

- Guangzhou

- CN

- 511450

- AnNa.Yang62@hotmail.com

- 8618157319536

Responsible Person in the EU

The following information outlines the contact information for the responsible person in the EU. The responsible person is the designated economic operator based in the EU who is responsible for the compliance obligations relating to the relevant product sold into the European Union.

- Apex CE Specialists GmbH

- Grafenberger Allee 277

- Düsseldorf

- DE

- 40237

- Info@apex-ce.com

- 4921186392011